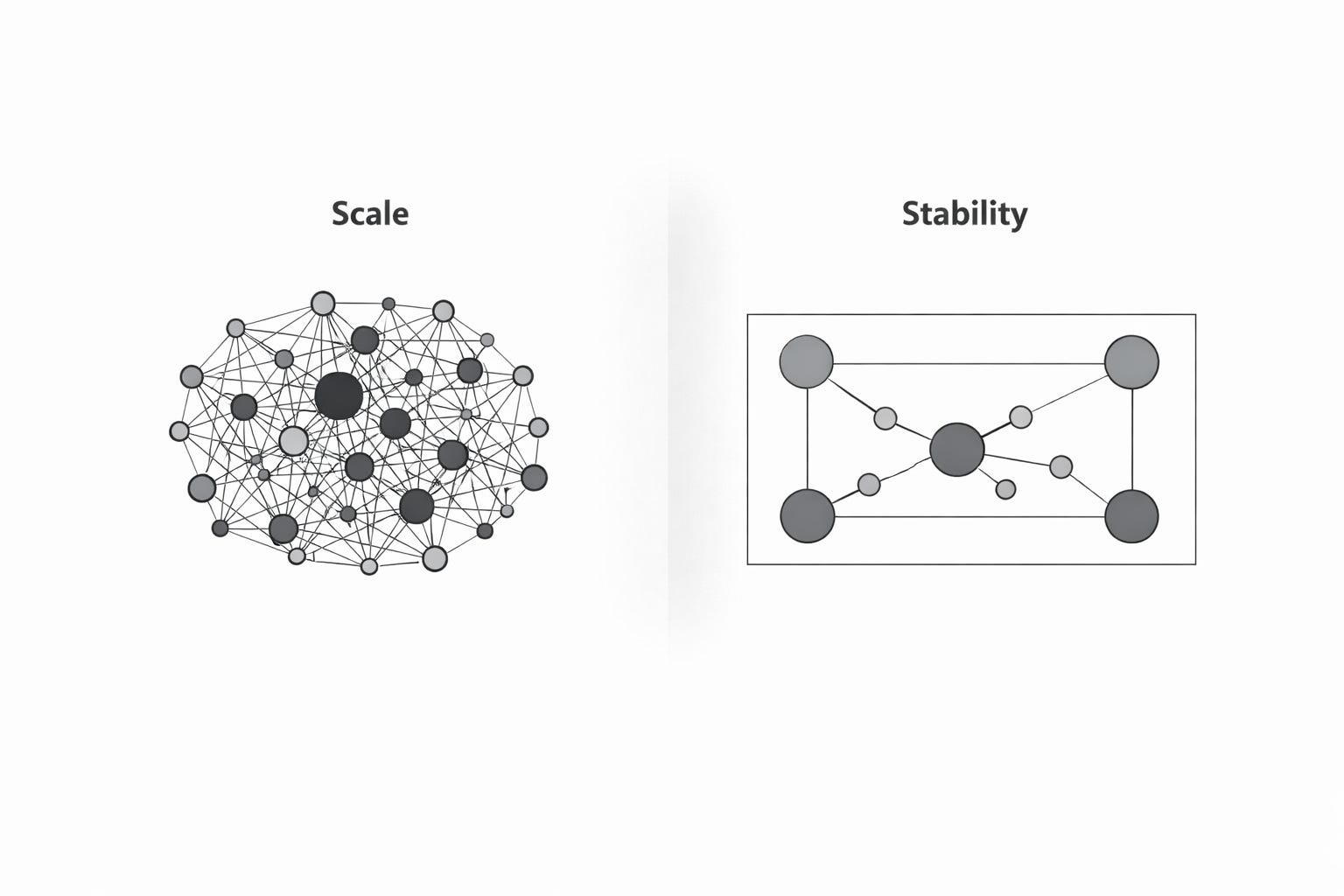

For a long time, scale was the hard problem.

How many users could a system support?

How many requests could it handle?

How quickly could it respond?

Most of modern computing is a story of answering those questions. We built layers of abstraction, automation, and redundancy to push scale further and further out of the way.

And largely, it worked.

But by the time systems reach real scale, the failure mode is no longer a lack of throughput or efficiency. It’s a loss of coherence.

Control doesn’t disappear.

It fragments into workflows, approvals, priors, dashboards, and informal human backstops that hold just long enough to mask instability.

At that point, scale is no longer the constraint shaping system behavior.

Stability is.

Scale was a solvable problem

Scale yields to replication.

If a system can do something once, it can usually be made to do it many times by:

parallelizing work

caching results

distributing load

removing unnecessary coordination

These techniques work because scale problems are quantitative. They can be measured, optimized, and amortized.

Stability problems are different.

They are qualitative.

They emerge from interaction, timing, and dependency. They show up not as overload, but as drift. Not as failure, but as brittleness. Not as crashes, but as systems behaving plausibly wrong at speed.

You can scale a system long past the point where it remains governable.

What breaks at scale isn’t performance; it’s alignment

When systems were smaller, authority was implicit.

Decisions happened close to their consequences. Context was shared. Oversight was direct. When something went wrong, responsibility was legible.

As systems grow:

authority fragments

context thins

decisions become asynchronous

consequences propagate beyond visibility

None of this is a bug. It’s the natural result of distribution.

The mistake is assuming that techniques designed to manage load will also manage alignment.

They won’t.

You can add more humans, more reviews, more process, and still end up with a system that behaves coherently most of the time, but fails in ways that are hard to predict, harder to trace, and almost impossible to intervene in once underway.

That is not a scale failure.

It is a stability failure.

Stability is not the absence of failure

In traditional engineering, stability is often framed as resilience: the ability to recover after something goes wrong.

That definition is insufficient here.

In autonomous and semi-autonomous systems, stability is about preventing certain failure modes from becoming representable in the first place.

A stable system is not one that reacts well to error.

It is one where classes of error are structurally bounded.

This is why adding oversight rarely restores control. Oversight observes behavior. Stability shapes what behavior is possible.
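
One way to picture "structurally bounded" is a type that cannot hold an out-of-range value at all. The sketch below is only an illustration of that idea, not a prescribed design: the refund scenario, the `BoundedRefund` and `issue_refund` names, and the `MAX_REFUND` ceiling are all assumptions invented for this example.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical ceiling, chosen only for illustration.
MAX_REFUND = Decimal("100.00")


@dataclass(frozen=True)
class BoundedRefund:
    """A refund that cannot be constructed outside its permitted range."""

    amount: Decimal

    def __post_init__(self) -> None:
        # The bound is checked once, at construction, before any action exists.
        if not (Decimal("0") < self.amount <= MAX_REFUND):
            raise ValueError(f"refund {self.amount} is outside (0, {MAX_REFUND}]")


def issue_refund(refund: BoundedRefund) -> str:
    # No range check here: an out-of-bounds refund never reaches this point
    # through normal construction, so there is nothing left to review.
    return f"refunded {refund.amount}"


print(issue_refund(BoundedRefund(Decimal("25.00"))))  # refunded 25.00
```

Calling `BoundedRefund(Decimal("5000"))` raises `ValueError` before any refund logic runs. Python enforces this through convention rather than an absolute guarantee, but the design choice is the point: the bound lives in the structure of the value, not in a reviewer watching what happens to it.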

When stability is missing, systems don’t necessarily fail loudly. They fail quietly, through compounding small decisions that individually look reasonable, but collectively drift outside intent.

Why scale amplifies instability

At low volume, humans compensate for instability without realizing it.

They notice edge cases.

They resolve ambiguity informally.

They absorb mismatches between intent and execution.

At scale, those compensations stop working.

Not because people are careless, but because:

decisions happen too fast

there are too many of them

the cost of intervening becomes asymmetric

Human judgment doesn’t disappear. It becomes downstream.

By the time someone is aware a system is behaving incorrectly, the behavior has already propagated. Control becomes retrospective. Intervention becomes symbolic.

This is why systems can appear safe right up until they aren’t.

The architectural shift

When scale was the constraint, the goal was expansion.

When stability becomes the constraint, the goal changes.

The question is no longer:

“How do we let the system do more?”

It becomes:

“Under what conditions is the system allowed to act at all?”

That is an architectural question.

It cannot be answered by policy alone.

It cannot be answered by monitoring alone.

It cannot be answered by inserting humans into the flow.

It requires making authority explicit: encoding it into the structure of the system so that decisions are evaluated before execution, not explained afterward.

This is the point where architecture stops being an implementation detail and becomes the primary control surface.
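
As a rough sketch of what "evaluated before execution" could look like in code, consider a gate that holds explicit grants of authority and refuses to run any action no grant covers. Everything here is hypothetical: the `Action`, `Grant`, and `Gate` names, their fields, and the example actors are assumptions made for illustration, not a reference design.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass(frozen=True)
class Action:
    actor: str   # who is asking to act
    kind: str    # what they want to do, e.g. "restart_service"
    target: str  # what the action would affect


@dataclass(frozen=True)
class Grant:
    """An explicit statement of authority: actor may do kind on these targets."""

    actor: str
    kind: str
    targets: FrozenSet[str]


class Gate:
    """Runs an effect only if some grant covers the proposed action."""

    def __init__(self, grants: List[Grant]) -> None:
        self._grants = list(grants)

    def permits(self, action: Action) -> bool:
        return any(
            g.actor == action.actor
            and g.kind == action.kind
            and action.target in g.targets
            for g in self._grants
        )

    def execute(self, action: Action, effect: Callable[[Action], str]) -> str:
        # Authority is evaluated first; the effect is never called without it.
        if not self.permits(action):
            raise PermissionError(f"no grant covers {action}")
        return effect(action)


gate = Gate([Grant("deploy-bot", "restart_service", frozenset({"staging"}))])
result = gate.execute(
    Action("deploy-bot", "restart_service", "staging"),
    lambda a: f"restarted {a.target}",
)
print(result)  # restarted staging
```

The same call with `target="production"` raises `PermissionError` and the effect never runs. The question of who may act is answered by data the system carries, rather than by a log someone reads afterward.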

Scale optimized systems; stability governs them

Most existing systems were designed to scale first and govern later.

That ordering made sense when humans remained the primary decision-makers and software acted in bounded, predictable ways.

It breaks down when systems:

act continuously

coordinate with other systems

operate across organizational boundaries

make decisions faster than humans can follow

At that point, governance cannot be layered on top.

It has to be structural.

Stability becomes the limiting factor not because systems are fragile, but because unchecked autonomy is efficient at finding the edges of what has not been designed.

What comes next

So far, this series has established three things:

Autonomy predates AI.

Hierarchy no longer governs.

Human-in-the-loop does not restore control.

This article introduces the reframing that follows:

Stability, not scale, is now the defining constraint of intelligent systems.

In the next article, we’ll examine why architecture, rather than culture, process, or policy, has become the primary mechanism for achieving stability, and what it means to design systems where autonomy is expected rather than feared.

Once scale is no longer the goal, architecture has to do different work.

And that work has only just begun.

Next in the series: Representation vs Interaction
