From Orchestration to Authority

The Evolution of Automation | A companion to Articles 5–7

Editor's note: This companion paper expands the structural argument made in the Evolution of Automation talk. It is designed to be read first. The talk, embedded at the end, provides the compressed live version of the same thinking. Together they cover the transition from orchestration to intent-based supervision in more depth than either does alone.

The problem with execution-first thinking

Automation did not begin with AI. It began with scripts. Then workflows. Then orchestration engines. For most of the last two decades, those tools were sufficient because the systems they governed were fundamentally predictable. A trigger entered the system, a predefined sequence executed, an output appeared.

The problem was never execution. We became very good at encoding execution paths. The problem was the assumption underneath them: that the path could always be known in advance.

As Article 5 of this series, Representation vs Interaction, argues, failure in complex systems does not emerge from cognition. It emerges from interaction. And the failure mode that orchestration is least equipped to handle is the one that appears the moment two valid, independently reasonable decisions arrive at the same point without a mechanism to arbitrate between them.

Automation is control of steps. Autonomy is control of authority.

This distinction is the axis on which the entire argument turns. When systems interpret intent rather than execute scripts, the relevant engineering question is no longer “what happens next?” It becomes “who is allowed to decide what happens next?” That is an authority question, not an execution question. And it requires a different kind of architecture to answer it.

How complexity breaks deterministic automation

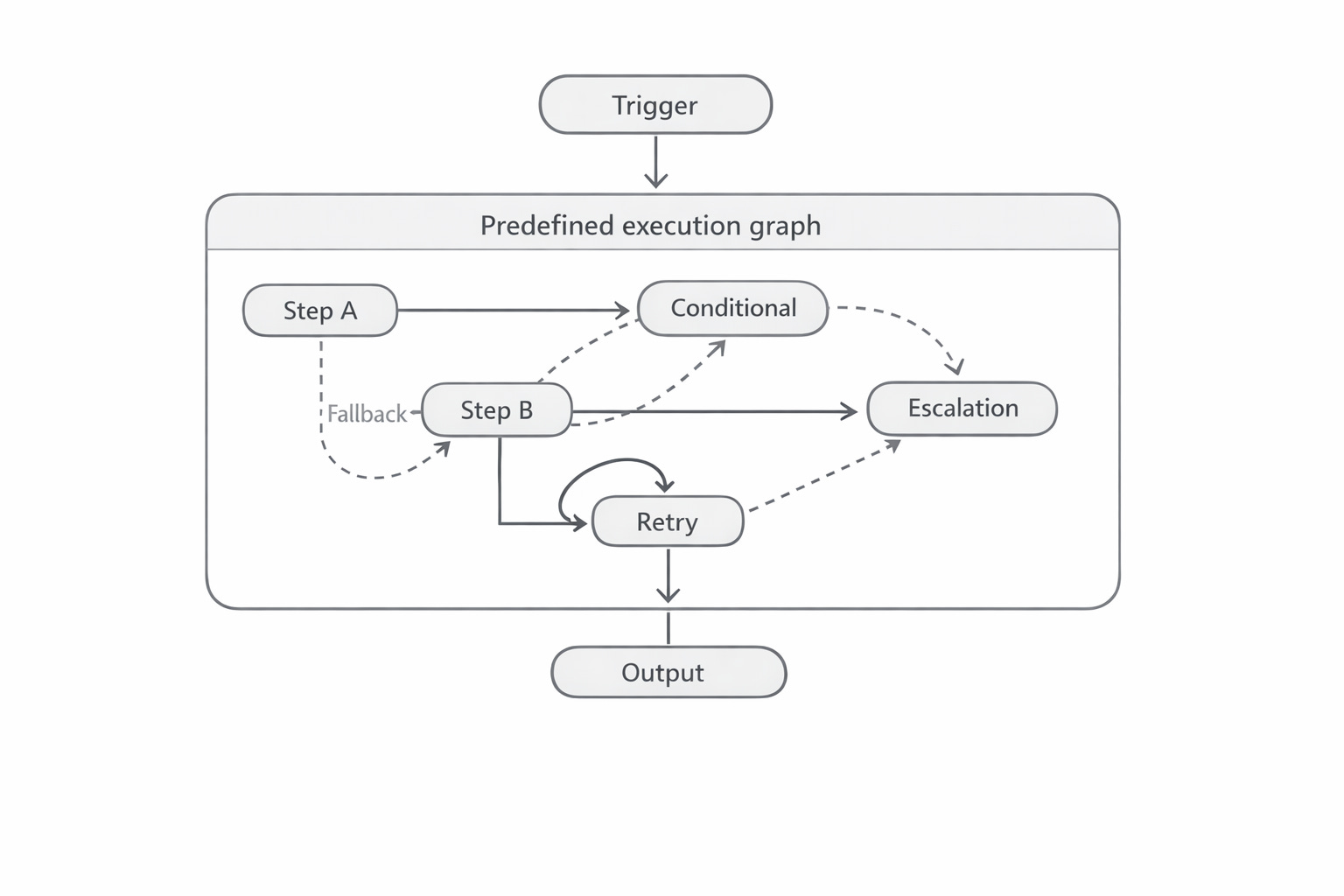

Deterministic automation has a structural elegance: explicit control flow, known state transitions, human-defined sequencing. When something fails, the system retries or escalates. The path graph is static. Workers do not decide. They execute.

This model breaks not because it is poorly designed, but because it rests on a condition that real systems routinely violate: the ability to predict the path in advance.

The moment variability enters the system, conditionals multiply. Retries layer on top of retries. Fallback paths branch from fallback paths. The graph that was once a clean sequence becomes a sprawling tangle of predefined transitions, each individually rational, collectively unmanageable. The system is still deterministic. But it is no longer tractable.

Crucially, even the most complex deterministic system does not reason. It does not interpret intent. It does not adapt at runtime. It executes an increasingly elaborate set of rules that someone encoded in advance. And as uncertainty increases, the number of rules required grows combinatorially. At some scale, prediction becomes the bottleneck: not performance, not throughput, but the fundamental inability to anticipate every path the world will present.
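A rough way to see the combinatorial growth, under a deliberately simple assumption: suppose each source of variability is an independent yes/no condition the workflow has to branch on. The condition names and helper functions below are hypothetical and only illustrate the arithmetic; real workflows have correlated conditions and will not hit the upper bound exactly.

```python
from itertools import product


def enumerate_paths(conditions):
    """Return every combination of outcomes for independent yes/no conditions."""
    return list(product([False, True], repeat=len(conditions)))


def path_count_for(n):
    """Upper bound on distinct paths for n independent binary conditions."""
    return 2 ** n


# Hypothetical sources of variability in a refund workflow.
conditions = ["payment_timeout", "partial_refund", "fraud_flag", "currency_mismatch"]

paths = enumerate_paths(conditions)
path_count = len(paths)  # one predefined path per combination of outcomes
```

Four conditions already imply sixteen paths to encode. Twenty imply more than a million, which is the sense in which prediction, rather than throughput, becomes the limiting factor.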

Figure 1: Complexity accumulates as conditional branches, retries, and fallback paths proliferate. The structure is still deterministic. It is no longer tractable.

The inflection: systems that interpret intent

Large language models introduced something that orchestration could not provide: systems capable of reasoning about goals and constraints rather than executing predefined transitions. This is not a quantitative shift (faster automation, cheaper automation). It is a qualitative one.

Agentic systems can evaluate context. They can interpret objectives. They can adapt decisions at runtime in response to conditions they were never explicitly programmed to handle. This becomes critical in exactly the situations where deterministic automation fails: complex, context-rich tasks where the path cannot be fully known in advance.

But interpretation changes the constraint. The question is no longer how to encode the correct sequence. It is how to govern a system that is now capable of choosing its own sequence. That requires addressing something orchestration never had to: authority.

Autonomy does not break when agents are unintelligent. It breaks when authority is undefined.

A case study in undefined authority

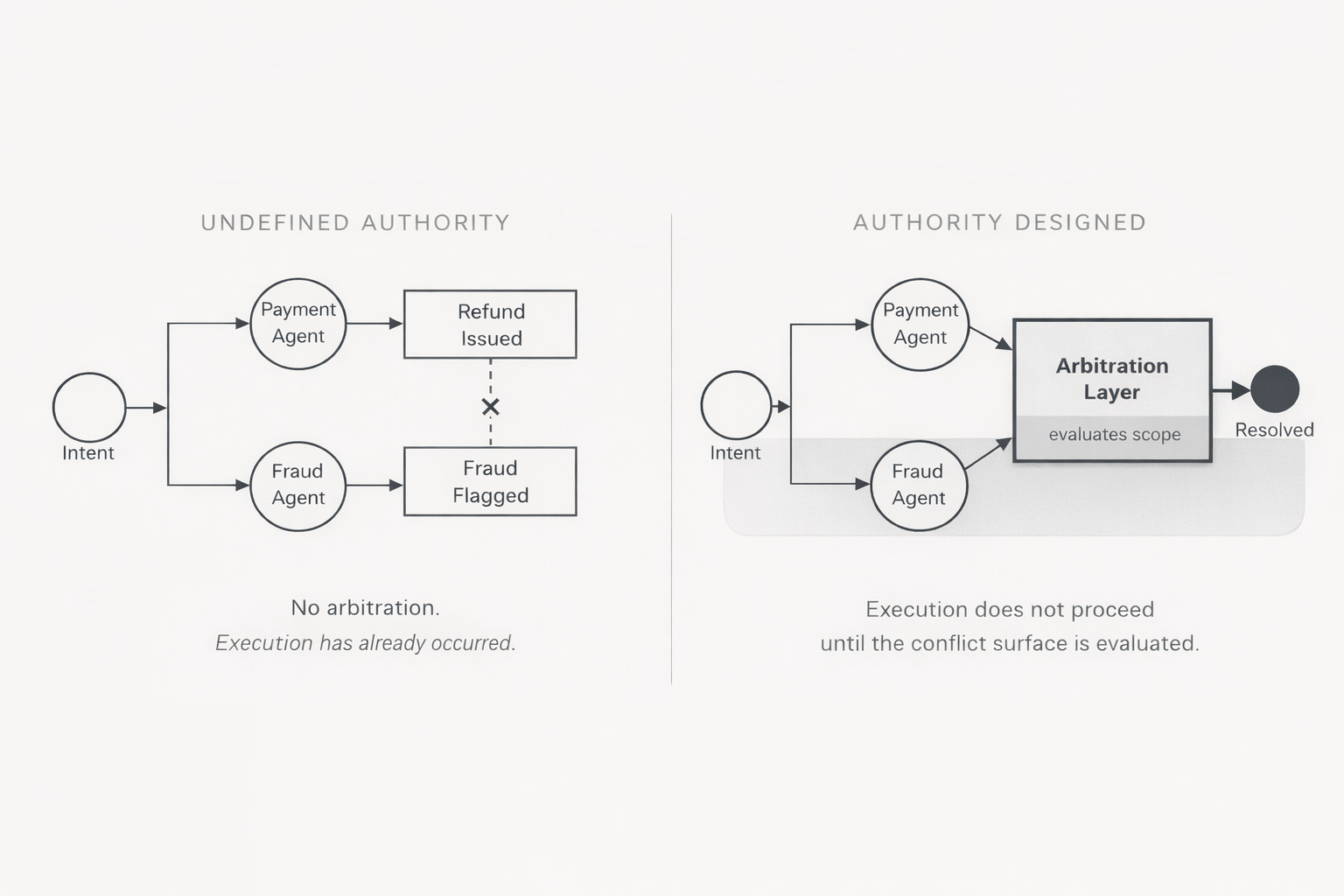

Consider a concrete scenario. A customer submits a refund request. An LLM interprets the intent and routes the request to a payment agent, and the refund is issued. In parallel, a fraud detection agent evaluates the same transaction and flags an anomaly. Two agents. Two valid decisions. No mechanism to arbitrate between them.

This scenario is worth examining carefully because it is not exotic. It is the normal operating condition of any system in which multiple autonomous components act on overlapping data with different objectives. The payment agent is not broken. The fraud agent is not broken. Both behaved exactly as designed. The failure was in the authority structure that surrounded them.

What undefined authority actually means

The absence of authority design in this scenario produces five specific failure modes, each compounding the others.

No arbitration layer. There is no component whose job is to resolve conflict between the payment decision and the fraud flag. Both decisions exist simultaneously with no mechanism to determine which takes precedence.

Ambiguous escalation. Even if a human is notified, the notification carries no authority structure. Who reviews it? Under what criteria? With what override rights? The escalation is a signal without a receiver.

Retrospective accountability. The refund has already been issued before the fraud flag arrives. Whatever resolution occurs now is remediation, not governance. Control became retrospective at the moment execution outpaced arbitration.

Diffuse responsibility. Both agents can point to their individual decision as correct. No component owns the outcome. Accountability dissolves into the interaction between them.

Invisible coupling. Payment and fraud are implicitly coupled through the transaction, but that coupling was never made explicit in the architecture. The interaction happened; the architecture did not model it.

What authority design changes

A well-architected version of the same system would not eliminate the possibility of conflict. It would define in advance what happens when conflict occurs.

The arbitration layer, the component that mediates between payment and fraud decisions, exists not as an emergency intervention but as a designed execution path. It knows which signal takes precedence under which conditions, what threshold triggers escalation versus automatic resolution, which actions require human review before execution, and where execution must stop by default if neither condition is satisfied.

This is what Article 6: Bounded Interaction calls a bounded interaction surface. The payment agent and the fraud agent do not interact directly. They interact through a mediation point that was designed to absorb exactly this kind of conflict. The boundary does not prevent the agents from acting. It shapes the conditions under which they are allowed to act.
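One way such a mediation point could look in code is sketched below. Everything here is an assumption made for illustration: the type names, the `arbitrate` function, the 0-to-1 risk score, and the three threshold values are hypothetical, and a real policy would come from governance decisions rather than constants in a module. The point is only that precedence, escalation threshold, review requirement, and default stop are all decided before any refund executes.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    EXECUTE = "execute"
    HUMAN_REVIEW = "human_review"
    HOLD = "hold"


@dataclass(frozen=True)
class PaymentDecision:
    approve_refund: bool
    amount: float


@dataclass(frozen=True)
class FraudSignal:
    risk_score: float  # assumed range: 0.0 (clean) to 1.0 (certain fraud)


# Hypothetical policy values, chosen only for the example.
REVIEW_THRESHOLD = 0.3
BLOCK_THRESHOLD = 0.8
AUTO_APPROVE_LIMIT = 100.0


def arbitrate(payment, fraud):
    """Decide what may happen before any refund is executed."""
    if payment is None or fraud is None:
        return Outcome.HOLD  # a missing signal means stop by default
    if not payment.approve_refund:
        return Outcome.HOLD
    if fraud.risk_score >= BLOCK_THRESHOLD:
        return Outcome.HOLD  # the fraud signal takes precedence
    if fraud.risk_score >= REVIEW_THRESHOLD or payment.amount > AUTO_APPROVE_LIMIT:
        return Outcome.HUMAN_REVIEW  # a human decides before execution
    return Outcome.EXECUTE
```

In this sketch the payment agent never issues the refund itself. It submits a decision, the fraud agent submits a signal, and only an `Outcome.EXECUTE` result releases the action.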

Figure 2: Authority design inserts an arbitration layer between conflicting agents. Execution does not proceed until the conflict surface has been evaluated.

From orchestrator to supervisor

The orchestrator and the supervisor are not the same thing, and conflating them is one of the most common errors in agentic system design.

An orchestrator begins with an action. An event occurs, a workflow activates, control flows downward through a predefined execution graph. The orchestrator decides what runs, in what order, under what conditions. Workers do not decide. They execute. The orchestrator is concerned with topology: how components are wired.

A supervisor begins with intent. It does not micromanage execution steps. It interprets goals, evaluates capability, and assigns authority. Control does not flow through every step. It flows through boundaries. The supervisor is concerned with governance: who is allowed to decide.

This transition from topology to governance is the architectural shift that agentic systems require. Once systems can interpret intent, the bottleneck is no longer execution sequencing. It is authority allocation. And authority allocation cannot be handled by an orchestrator whose fundamental model assumes the path is known in advance.

The orchestrator controls the execution graph. The supervisor controls decision authority. These are not the same layer.

Autonomy as architecture, not permission

There is a persistent misunderstanding about what autonomy means in the context of agentic systems. It is frequently treated as a spectrum of permission: agents with more autonomy are agents with fewer constraints. This framing is precisely backwards.

Autonomy without architecture is not intelligence. It is loss of control. The relevant property of a well-designed autonomous system is not how free its components are to act. It is how precisely their authority has been bounded.

Bounded authority means every agent and supervisor knows three things: what it is permitted to do, what it is not permitted to do, and when escalation is required. These are not runtime decisions. They are design decisions, encoded before execution begins.
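As a minimal sketch of what "encoded before execution begins" might mean, the scope below is a frozen data structure that answers the three questions directly. The class name, the action names, and the deny-by-default rule are assumptions for illustration, not a prescribed design.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class AuthorityScope:
    permitted: frozenset  # actions the agent may take on its own
    escalate: frozenset   # actions that must go to a supervisor or human

    def check(self, action):
        if action in self.permitted:
            return Verdict.ALLOW
        if action in self.escalate:
            return Verdict.ESCALATE
        return Verdict.DENY  # anything not named is outside scope


# Hypothetical scope for the payment agent from the case study.
PAYMENT_AGENT_SCOPE = AuthorityScope(
    permitted=frozenset({"lookup_transaction", "issue_refund_under_limit"}),
    escalate=frozenset({"issue_refund_over_limit"}),
)
```

Because the dataclass is frozen, the agent cannot widen its own scope at runtime. Changing what is permitted requires constructing a new scope, which is a design-time act.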

This is the distinction that Article 7: When Boundaries Must Decide develops: the moment a boundary is crossed is an operational moment, but what happens at that moment must have been an architectural decision. A boundary that has not been designed to enforce itself is not a boundary. It is an expectation. And expectations do not stabilize autonomous systems at scale.

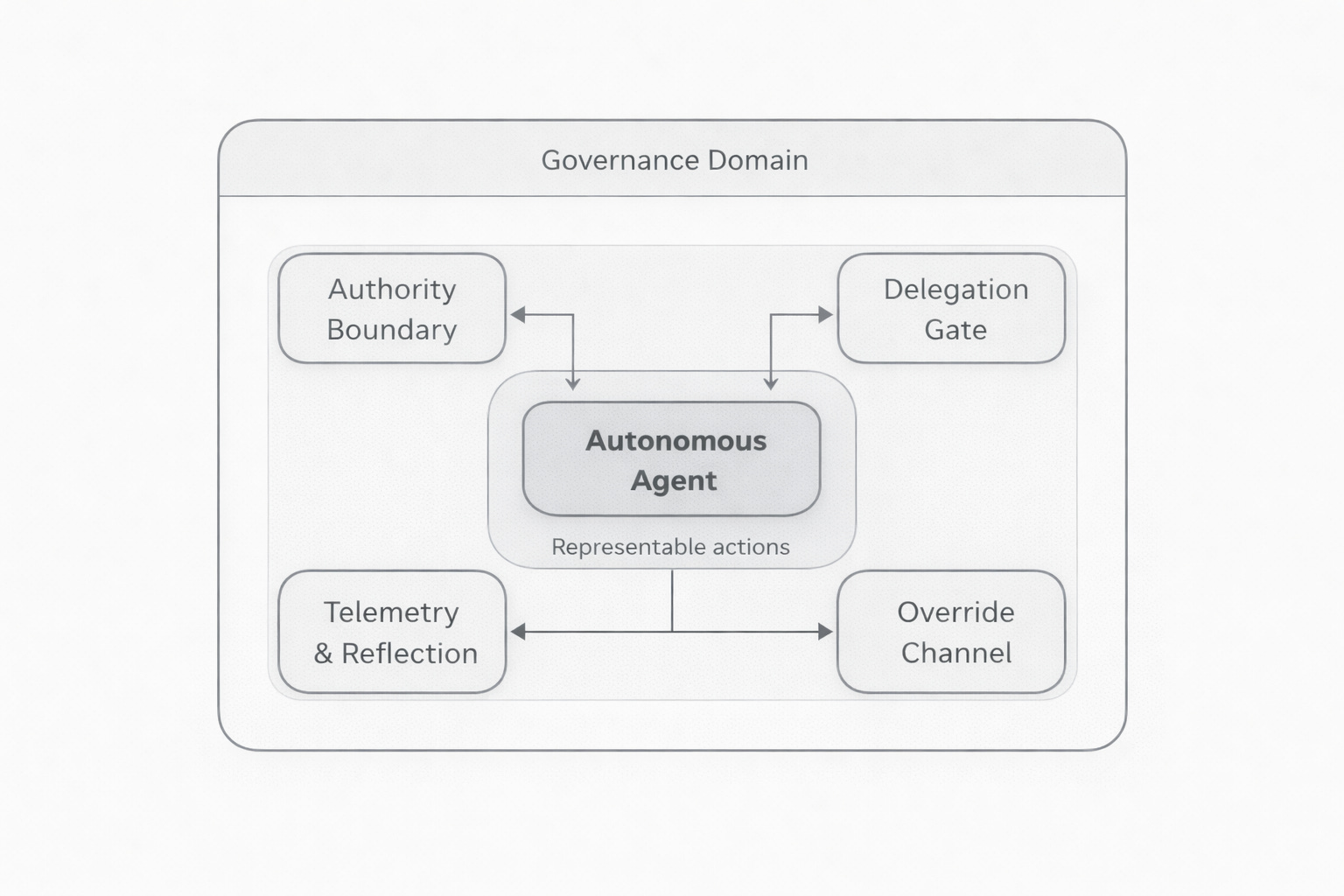

Autonomy is stabilized through four structural properties, each of which must be designed, not assumed.

Bounded authority: Agents are issued scope. They are not given freedom. The scope defines what actions are representable in their execution context.

Explicit delegation roles: Just because an agent can do something does not mean it has been granted authority to do it. Delegation is a decision, not a side effect of capability.

Defined override paths: Human intervention must be designed, not improvised. Escalation is a known pathway, not an emergency reaction. A system that can only be stopped by surprise has not been architected for override.

Structured telemetry: Autonomy requires observability. Telemetry is not logging; it is feedback for evolution. A system that cannot measure its own decision patterns cannot be governed over time.

Figure 3: Autonomy is not a degree of freedom. It is a set of structural properties: bounded authority, explicit delegation, designed override, and observable telemetry.

Control surfaces: where authority enters and is constrained

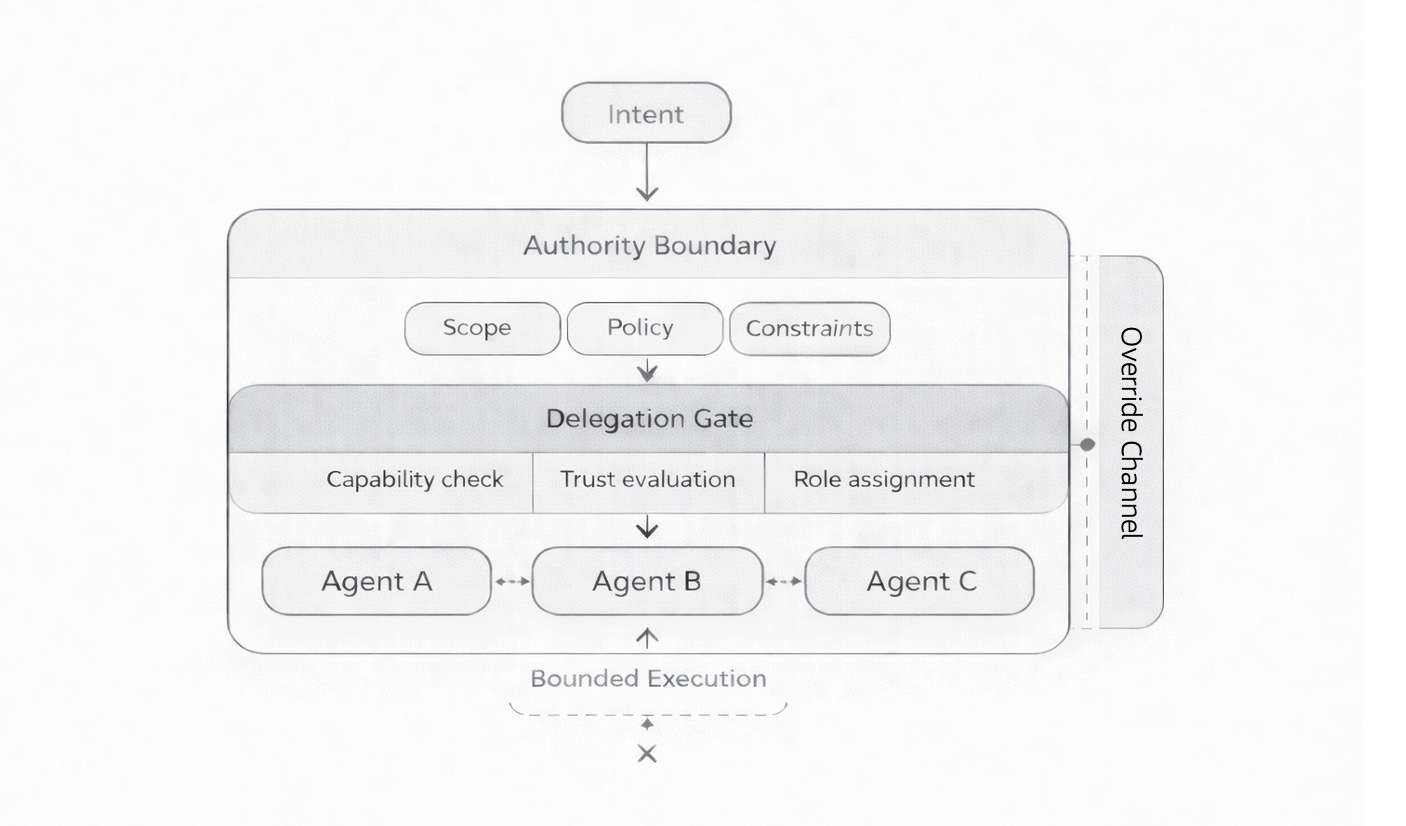

When the argument shifts from execution architecture to governance architecture, the relevant design question changes. It is no longer “how are components wired?” It is “where does authority enter, flow, and get constrained?”

In an autonomous system, authority does not simply exist. It enters the system at a specific point. It flows through defined structures. It is constrained at explicit boundaries. It can be interrupted at designed override channels. It must be observed continuously. Those points are control surfaces, and they are where governance architecture lives.

Intent intake

Intent is not execution. It is a claim on authority. The moment a goal is introduced into a system, a chain of authority allocations begins. What gets done, by whom, and under what constraints all flow from the interpretation of intent at the intake point. If intent is ambiguous, authority becomes ambiguous downstream. If intent is precise, boundaries can be set precisely.

Authority boundary

The authority boundary defines scope: what is allowed, what is not allowed, where the limits are. Without an explicit authority boundary, autonomy drifts. It does not fail noisily. It accumulates small expansions of scope that individually appear reasonable and collectively produce a system behaving outside its intended parameters.

Delegation gate

Discovery is not the same as authority. The delegation gate is where authority is granted, not assumed. In a multi-agent system, this is where agents advertise capability and supervisors evaluate trust and scope before assigning tasks. This is capability-based delegation, not deterministic routing. The system determines who is allowed to decide, not just what happens next.
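The separation between advertising a capability and being granted authority over it can be sketched as two distinct records. The `DelegationGate` class and its method names are hypothetical; the only claim is that an assignment check consults grants, never advertisements.

```python
from dataclasses import dataclass, field


@dataclass
class DelegationGate:
    advertised: dict = field(default_factory=dict)  # agent -> set of capabilities
    granted: set = field(default_factory=set)       # (agent, capability) pairs

    def advertise(self, agent, capability):
        """Discovery: the agent says what it is able to do."""
        self.advertised.setdefault(agent, set()).add(capability)

    def grant(self, agent, capability):
        """Delegation: a supervisor decides the agent may actually do it."""
        if capability not in self.advertised.get(agent, set()):
            raise ValueError(f"{agent} never advertised {capability}")
        self.granted.add((agent, capability))

    def may_assign(self, agent, capability):
        return (agent, capability) in self.granted


gate = DelegationGate()
gate.advertise("payment_agent", "issue_refund")
gate.advertise("payment_agent", "close_account")
gate.grant("payment_agent", "issue_refund")
```

Here the payment agent can close accounts in the sense that it advertises the capability, but `gate.may_assign("payment_agent", "close_account")` is false because no supervisor granted it.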

Override channel

Autonomy without interruptibility is not autonomy. It is risk. The override channel must be designed before it is needed. When it is only introduced after a failure, it is not a governance mechanism. It is a recovery mechanism, which operates too late and at too high a cost.
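A minimal sketch of an override channel that exists before it is needed, assuming execution can be broken into steps with a check between them. The class, the step names, and the use of `threading.Event` as the stop signal are illustrative choices; the simulated halt inside the second step stands in for a human operator acting mid-run.

```python
import threading


class OverrideChannel:
    """A stop signal a human or supervisor can raise at any time."""

    def __init__(self):
        self._halt = threading.Event()
        self.reason = None

    def halt(self, reason):
        self.reason = reason
        self._halt.set()

    def halted(self):
        return self._halt.is_set()


def run_steps(steps, override):
    """Run callables in order, checking the override channel before each one."""
    completed = []
    for name, step in steps:
        if override.halted():
            break  # stop before starting any further step
        step()
        completed.append(name)
    return completed


override = OverrideChannel()
steps = [
    ("validate", lambda: None),
    ("reserve_funds", lambda: override.halt("operator stop")),  # simulated human halt
    ("issue_refund", lambda: None),
]
completed = run_steps(steps, override)
```

The irreversible step, `issue_refund`, never starts once the channel is halted. The granularity of the checks is itself a design decision: an override that is only consulted once per workflow is much weaker than one consulted before every consequential action.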

Telemetry and reflection

Authority that cannot be observed cannot be governed. Telemetry must capture not just outcomes but decision patterns: the structure of what the system chose, under what conditions, over time. This is the feedback mechanism that allows authority boundaries to evolve without requiring the system to be redesigned from scratch. Without it, autonomy cannot stabilize. It becomes drift.
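To make the difference between logging outcomes and capturing decision patterns concrete, the sketch below records each decision with its condition and then aggregates over them. The record fields, the condition labels, and both helper functions are hypothetical; a production system would likely use a metrics or tracing backend rather than an in-memory list.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    agent: str
    action: str
    verdict: str    # e.g. "allow", "escalate", "deny"
    condition: str  # hypothetical label for the context of the decision


def escalation_rate(records, agent):
    """Fraction of an agent's decisions that were escalated."""
    mine = [r for r in records if r.agent == agent]
    if not mine:
        return 0.0
    return sum(r.verdict == "escalate" for r in mine) / len(mine)


def pattern_counts(records):
    """Count how often each (agent, condition, verdict) combination occurred."""
    return Counter((r.agent, r.condition, r.verdict) for r in records)


records = [
    DecisionRecord("payment_agent", "issue_refund", "allow", "low_risk"),
    DecisionRecord("payment_agent", "issue_refund", "allow", "low_risk"),
    DecisionRecord("payment_agent", "issue_refund", "escalate", "high_amount"),
    DecisionRecord("fraud_agent", "flag_transaction", "allow", "anomaly"),
]
```

A shift in `escalation_rate` over time, or a new combination appearing in `pattern_counts`, is the kind of signal that would prompt a review of an authority boundary before drift becomes an incident.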

Figure 4: Control surfaces define where authority enters, is constrained, can be interrupted, and is observed. These are governance properties, not execution properties.

Layered supervision at scale

Once authority is understood as a structural property rather than a permission setting, the question of scale becomes clearer. Scale is not about adding more agents. It is about composing supervision correctly.

At the foundation sit specialized agents: narrow in scope, task-oriented, bounded in authority. They execute atomic capabilities. They do not coordinate the system. They perform work.

Above them sit modular supervisors. These interpret intent, assess capability, and delegate authority. They are not micromanaging steps. They are allocating responsibility. This is where the arbitration and evaluation patterns live. This layer defines who is allowed to decide.

At scale, supervision itself must adapt. Supervisors compose with other supervisors. Authority becomes layered. Governance becomes hierarchical, but not centralized. Control is distributed, but bounded. No single node governs the entire system. Each supervisory domain negotiates laterally with others.
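A small sketch of supervisors that compose laterally rather than under a central node, assuming each supervisor owns a fixed set of actions. The `Supervisor` class, the domain names, and the one-hop peer lookup are simplifications invented for the example; real negotiation between domains would involve more than a membership check.

```python
class Supervisor:
    def __init__(self, name, domain):
        self.name = name
        self.domain = set(domain)  # actions this supervisor may authorize
        self.peers = []

    def connect(self, other):
        """Link two supervisors laterally; neither sits above the other."""
        self.peers.append(other)
        other.peers.append(self)

    def authorize(self, action):
        """Return the name of the supervisor that owns the action, or None."""
        if action in self.domain:
            return self.name
        for peer in self.peers:
            if action in peer.domain:
                return peer.name  # authority sits in a neighboring domain
        return None  # no domain owns it, so nothing may execute


payments = Supervisor("payments", {"issue_refund"})
risk = Supervisor("risk", {"freeze_account"})
payments.connect(risk)
```

A request for `freeze_account` arriving at the payments supervisor is not executed there. It resolves to the risk domain, and an action that no domain claims resolves to `None`, which keeps control distributed but bounded.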

The critical implication is this: governance must be composed before agents are scaled. If the supervisory layer is weak, scaling agents does not improve the system. It amplifies its instability. As Article 6: Bounded Interaction establishes, more capable agents do not stabilize an unbounded system. They accelerate it.

Stable autonomy is engineered, not emergent. Compose supervision before scaling agents.

Five design principles for supervisory autonomy

The structural argument above resolves into a set of design principles that apply to any system in which autonomous components act on behalf of human intent.

Bound authority explicitly. Every agent and supervisor must know what it can do, what it cannot do, and when escalation is required. Autonomy without boundaries becomes drift. This is not a policy. It is an encoding.

Separate federation from delegation. The ability to discover a capability is not the same as the authority to use it. Federation is topology. Delegation is governance. Conflating them produces systems where capability expands without corresponding authority constraints.

Make override paths intentional. Human intervention should be designed, not injected reactively after failure. The override channel is a first-class architectural element, not an emergency procedure.

Instrument reflection and telemetry. Autonomy requires observability. Telemetry is not logging; it is feedback for evolution. The system must measure its own decision patterns, not just its outcomes.

Compose supervision before scaling agents. Governance must precede scale. A supervisory architecture that is not in place before agents proliferate cannot be retrofitted afterward without redesigning the system.

What the talk covers

The Evolution of Automation talk, embedded below, provides a compressed version of this argument as it was delivered live. It covers the structural evolution from scripts to orchestration to agentic systems, makes the authority problem concrete through the refund–fraud conflict scenario, and walks through the orchestrator-to-supervisor transition and the control surfaces model.

Reading this paper first gives the full structural argument. The talk then provides the live version: a faster, more direct pass through the same terrain, useful for those who want the argument delivered rather than developed.

This companion paper is part of the Architecting Autonomy series. It accompanies Article 5: Representation vs Interaction, Article 6: Bounded Interaction, and Article 7: When Boundaries Must Decide.
