Autonomy Is a Control Problem, Not a Trust Problem

Why governance fails without feedback, assurance, and stability by design

Editor’s note: This essay reframes governance as a control problem, arguing that autonomy demands feedback-stabilized architecture, not scaled human supervision.

Governance is not failing because organisations don’t care about risk. It is failing because we keep applying point-in-time oversight to systems that operate continuously. Reviews, audits, and approvals assume a world where behavior can be paused, observed, and corrected after the fact. Modern systems don’t wait. They act at machine speed, across fragmented contexts, long before human oversight can engage. In that environment, governance doesn’t collapse; it simply becomes irrelevant. What’s missing isn’t better policy or more dashboards. It’s a control model that matches the dynamics of the systems we’ve already built.

Governance Isn’t Broken: It’s Open-Loop

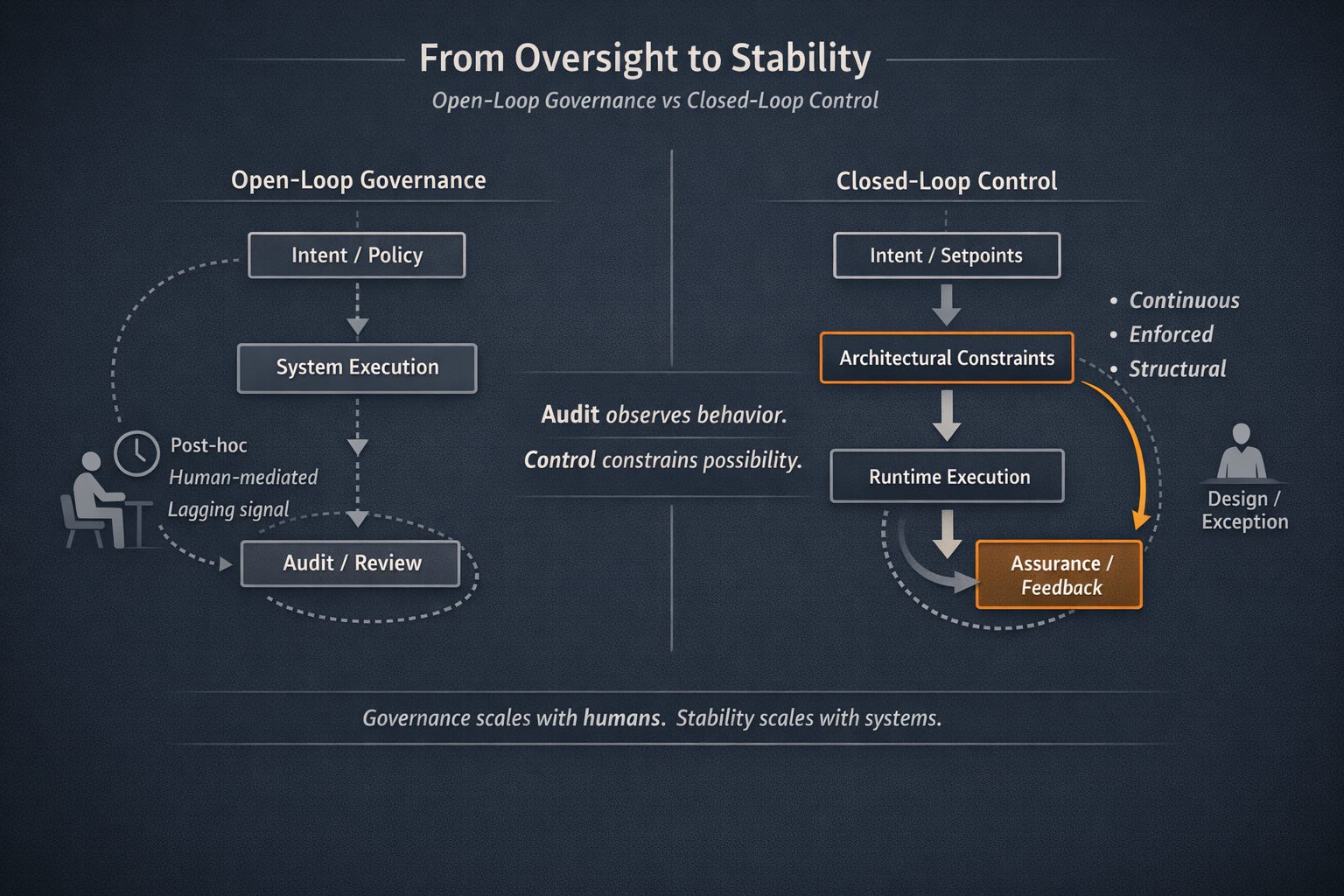

Most governance models operate as open-loop systems. Intent is defined through policy, standards, or guardrails. Execution proceeds independently. Behavior is observed later through logs, audits, or reviews. Correction comes afterwards, often manually, and usually once impact has already occurred.

That model assumes time, stability, and human availability. None of those assumptions hold in modern systems.

As systems scale, decisions fragment across services, agents, and automation layers. Action routinely precedes oversight. By the time governance engages, the system has already moved on. This isn’t a failure of discipline or intent; it’s a mismatch between the control model and the system’s operating reality.

Governance didn’t break. The environment outgrew it.

Trust Is Not a Property You Observe

Much of governance language revolves around trust: trusted systems, trusted actors, trusted decisions. But observation does not create trust. It merely detects behavior.

Audits tell us what happened. Reviews explain why. Dashboards visualize impact. None of these constrain what the system is allowed to do next.

In mature systems, trust is not inferred from good behavior. It is designed through enforced limits. What matters is not whether a system behaved correctly in the past, but whether it is structurally capable of behaving incorrectly in the future.

Trust that depends on inspection is already fragile. Trust that depends on structure scales.

Control Systems: Why Feedback Changes Everything

This is where control systems offer a more useful framing.

In an open-loop system, action proceeds without reference to outcome. In a closed-loop system, action is continuously adjusted based on feedback relative to explicit constraints or goals. The difference is not subtle. Open-loop systems rely on vigilance. Closed-loop systems rely on structure.

Mature engineered systems already work this way: trust comes from enforced constraints, not from observation. Setpoints define what “acceptable” looks like. Control surfaces define how the system can act. Feedback loops ensure deviation is detected and corrected automatically, not escalated for routine human intervention.
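
To make the open-loop/closed-loop distinction concrete, here is a toy simulation in Python. It is an illustration of the control idea, not a governance implementation, and everything in it is an assumption chosen for clarity: the hypothetical `Plant` class, the constant disturbance of 0.5 per tick, the gain of 0.8, and the action limit of 2.0. The setpoint stands in for “acceptable,” the clamp for the control surface, and the error term for feedback.

```python
from dataclasses import dataclass


@dataclass
class Plant:
    """Hypothetical toy system pushed off course by a constant disturbance each tick."""
    state: float = 0.0
    disturbance: float = 0.5

    def step(self, action: float) -> float:
        self.state += action + self.disturbance
        return self.state


def clamp(value: float, low: float, high: float) -> float:
    """The control surface: actions outside [low, high] cannot be applied at all."""
    return max(low, min(high, value))


def run(setpoint: float, ticks: int, closed_loop: bool,
        gain: float = 0.8, max_action: float = 2.0) -> float:
    plant = Plant()
    planned_step = setpoint / ticks  # open-loop plan, fixed in advance
    for _ in range(ticks):
        if closed_loop:
            # Feedback: the next action depends on the measured deviation from the setpoint.
            action = gain * (setpoint - plant.state)
        else:
            # No feedback: the plan executes regardless of what the system is doing.
            action = planned_step
        plant.step(clamp(action, -max_action, max_action))
    return plant.state


open_result = run(setpoint=10.0, ticks=20, closed_loop=False)
closed_result = run(setpoint=10.0, ticks=20, closed_loop=True)
print(f"open-loop: {open_result:.3f}, closed-loop: {closed_result:.3f}")
```

With these assumed parameters the open-loop run ends at 20.0, twice the setpoint, because the plan never sees the disturbance. The closed-loop run settles at about 10.625. That small residual offset is a known property of proportional-only control under a constant disturbance; adding integral action would remove it.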

This is the shift governance needs to make.

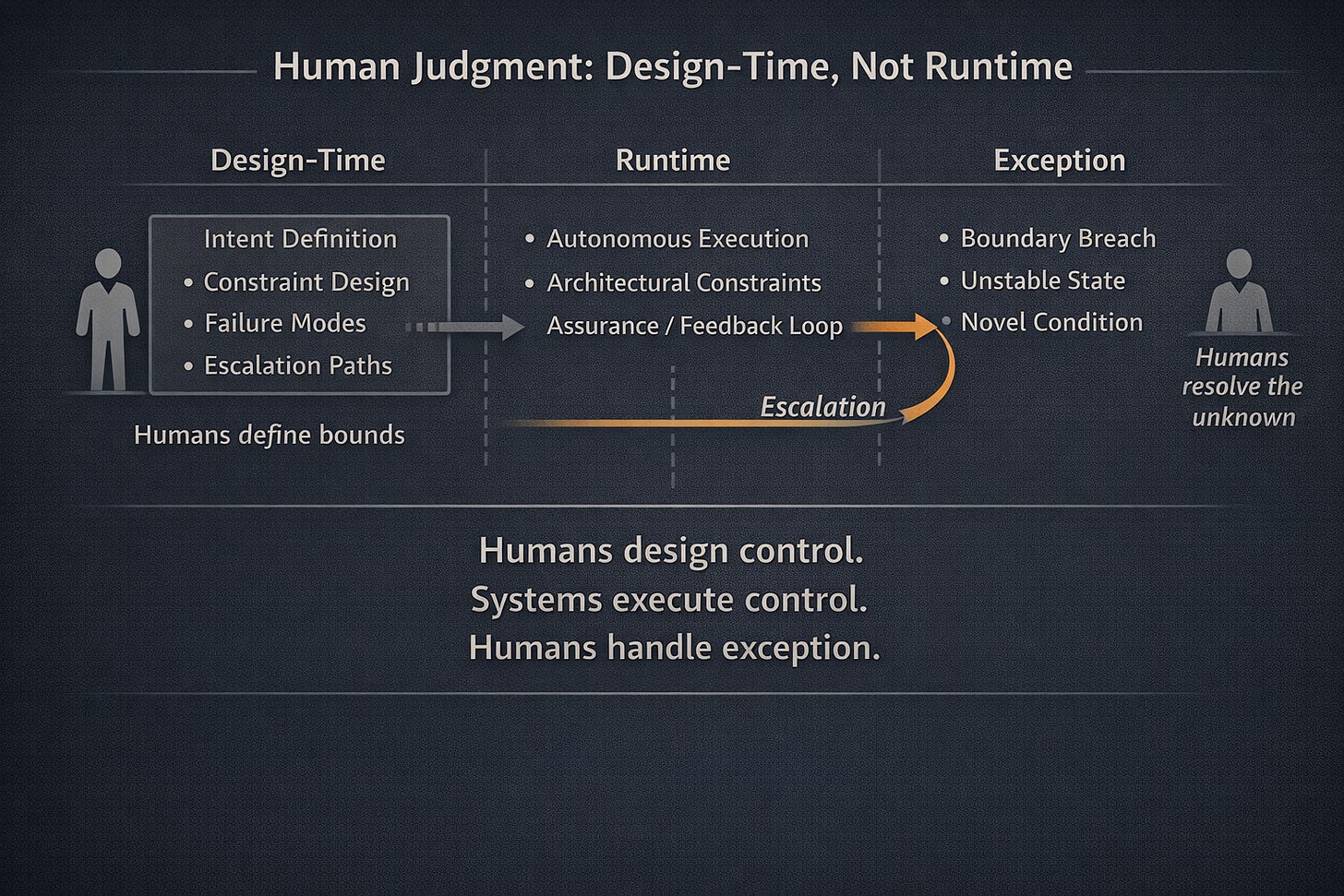

When governance is framed as a control problem, authority becomes enforceable rather than advisory. Actions outside the system’s designed bounds are not merely discouraged; they are structurally unavailable. Assurance becomes continuous rather than periodic. Humans move out of the steady-state path and into design and exception handling, where they belong.

Seen this way, assurance is not something that happens after execution. It is the mechanism that keeps execution aligned in real time.

Assurance Is the Control Loop, Not the Audit

Once governance is understood as a control problem, the role of assurance changes fundamentally. Assurance is no longer a downstream activity that validates compliance after the fact. It becomes the feedback loop that binds intent, architecture, and runtime behavior together.

In a closed-loop system, feedback must be immediate, authoritative, and enforceable. This is where configuration matters. Configuration is not an implementation detail; it is the executable expression of governance intent. What the system is allowed to do, what it cannot do, and how it responds under stress are encoded here.

Effective assurance continuously verifies that architectural and technical controls remain present, correctly configured, and functioning. Drift is treated as deviation from setpoints, not as a compliance failure discovered later. Correction happens automatically or predictably, not through manual escalation.
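
A loop of this kind can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference design: the control names in `SETPOINTS`, the `apply_fix` remediation hook, and the `locked` field are all hypothetical. Each pass treats drift as deviation from a setpoint, attempts an automatic correction, re-verifies it, and escalates to a person only when correction is not possible.

```python
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical setpoints: each control names a predicate the live configuration
# must satisfy and the value to restore if it drifts.
SETPOINTS: Dict[str, Dict[str, Any]] = {
    "public_access": {"check": lambda v: v is False, "desired": False},
    "encryption_at_rest": {"check": lambda v: v is True, "desired": True},
    "log_retention_days": {"check": lambda v: isinstance(v, int) and v >= 90, "desired": 90},
}


def apply_fix(config: Dict[str, Any], control: str, desired: Any) -> bool:
    """Hypothetical remediation hook; a real one would call the platform's own API."""
    if control in config.get("locked", ()):
        return False  # automatic correction is not permitted for this control
    config[control] = desired
    return True


def assure(config: Dict[str, Any],
           fix: Callable[[Dict[str, Any], str, Any], bool],
           escalate: Callable[[str, Any], None]) -> Tuple[List[str], List[str]]:
    """One pass of the assurance loop: detect drift, correct it, escalate only on failure."""
    corrected: List[str] = []
    escalated: List[str] = []
    for control, spec in SETPOINTS.items():
        if spec["check"](config.get(control)):
            continue  # within its setpoint: nothing to do
        if fix(config, control, spec["desired"]) and spec["check"](config.get(control)):
            corrected.append(control)  # drift corrected and re-verified
        else:
            escalated.append(control)
            escalate(control, config.get(control))  # exception boundary: hand to a human
    return corrected, escalated


# Example: one control drifts and is auto-corrected; another is locked and escalates.
live_config = {
    "public_access": True,
    "encryption_at_rest": True,
    "log_retention_days": 30,
    "locked": ("log_retention_days",),
}
escalations: List[Tuple[str, Any]] = []
corrected, escalated = assure(live_config, apply_fix,
                              lambda control, value: escalations.append((control, value)))
print(corrected, escalated)  # ['public_access'] ['log_retention_days']
```

The design choice worth noting is that escalation is the exception path, not the default: a person sees only the control the loop could not stabilize on its own.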

This is the difference between being compliant by declaration and compliant by construction.

In many organisations, configuration has already become the de facto source of truth, while governance documentation trails behind it. When configuration, implementation, and assurance are managed as separate concerns, gaps appear. Those gaps are where risk accumulates.

Closed-loop assurance collapses that separation. Governance intent defines constraints. Architecture encodes them. Assurance continuously validates their presence and behavior at runtime. The loop is tight enough that deviation cannot persist unnoticed or uncorrected.

This is why human-in-the-loop models struggle at scale. Humans are excellent at defining bounds and interpreting exceptions. They are not suited to continuous enforcement. When humans sit in the steady-state control path, systems slow or fail open. When they operate at design time and exception boundaries, systems stabilize.

Governance, in this model, is no longer a lagging signal. It becomes a living property of the system: continuously asserted, continuously enforced, and resilient at scale.

Autonomy Demands Stability, Not Supervision

Autonomy is not a future state introduced by AI. It is an existing condition amplified by speed, scale, and machine execution. Modern systems already act independently, across fragmented contexts, long before human oversight can intervene. AI simply makes that reality impossible to ignore.

This is why supervision-based governance models collapse under autonomous systems. They assume action can be paused, reviewed, and corrected by people. Autonomous systems do not wait. They sense, decide, and act continuously. In that environment, placing humans in the steady-state control path is not a safeguard; it is a bottleneck.

Stability, not supervision, is the governing constraint.

In autonomous systems, governance must exist ahead of cognition, not around it. Authority, scope, and escalation paths must be enforced as structural constraints, not trusted as behavioral expectations. What matters is not whether an agent can explain its decision after the fact, but whether it was ever permitted to make that decision in the first place.
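
One way to read “ever permitted” in code is as a check that runs before any tool executes, regardless of what the agent reasoned. The Python below is a minimal sketch under that assumption: the `Envelope` of allowed actions and limits, the `BoundedExecutor` that is the agent’s only path to its tools, the refund example, and the `payments-oncall` escalation path are all hypothetical and not drawn from any particular agent framework.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet


class OutOfBounds(Exception):
    """Raised when a requested action falls outside the agent's granted authority."""


@dataclass(frozen=True)
class Envelope:
    """Hypothetical authority envelope, fixed at design time by people."""
    allowed_actions: FrozenSet[str]
    max_amount: float
    escalation_path: str


class BoundedExecutor:
    """Sits between an agent and its tools; the agent never calls tools directly."""

    def __init__(self, envelope: Envelope, tools: Dict[str, Callable[[float], str]]) -> None:
        self._envelope = envelope
        self._tools = tools

    def execute(self, action: str, amount: float) -> str:
        # Authority is checked before execution, independent of the agent's reasoning.
        if action not in self._envelope.allowed_actions or action not in self._tools:
            raise OutOfBounds(f"{action!r} is not permitted; "
                              f"route to {self._envelope.escalation_path}")
        if amount > self._envelope.max_amount:
            raise OutOfBounds(f"{amount} exceeds limit {self._envelope.max_amount}; "
                              f"route to {self._envelope.escalation_path}")
        return self._tools[action](amount)


envelope = Envelope(allowed_actions=frozenset({"refund"}), max_amount=100.0,
                    escalation_path="payments-oncall")
executor = BoundedExecutor(envelope, {"refund": lambda amount: f"refunded {amount:.2f}"})

print(executor.execute("refund", 40.0))  # refunded 40.00
for action, amount in [("refund", 500.0), ("wire_transfer", 10.0)]:
    try:
        executor.execute(action, amount)
    except OutOfBounds as err:
        print("blocked:", err)
```

Whatever the agent decides, a request outside the envelope fails before anything runs, and the error names the escalation path instead of leaving the agent to reason its way around the limit.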

This reframes how safety, trust, and responsibility are achieved in AI systems. Trust does not come from transparency alone. It comes from bounded capability. Safety does not emerge from human review at scale. It emerges from feedback-stabilized architectures that continuously enforce intent at runtime.

Human involvement does not disappear in this model; it moves. Humans design the bounds, define the failure modes, and intervene when systems cannot stabilize themselves. They are architects of control, not supervisors of every action.

From Supervision to Stability

Autonomy does not break governance. It exposes whether governance was ever designed to control the system at all. In environments where systems sense, decide, and act continuously, oversight after execution is no longer a safeguard; it is a delay. Stability is the constraint autonomy introduces, and stability cannot be supervised into existence.

It has to be designed.

When governance is encoded as enforceable structure, assurance becomes continuous, architecture becomes the control surface, and trust stops being something we audit after the fact. It becomes a property of what the system is allowed to do. This is the shift autonomy demands: from observation to control, from supervision to stability, and from governance as intent to governance as executable reality.